On March 31, 2026, at 6:33 PM local time, a deploy pipeline log printed this line:

    Deploy pipeline completed successfully deployment_id=d3ba950b app_id=8942af99 duration_ms=58112

Fifty-eight seconds. That is how long it took sh0 -- our self-hosted deployment platform, written in Rust -- to clone a Git repository, analyze the stack, build a Docker image, start a container, pass a health check, configure a reverse proxy, and serve a live website.

The website was flin.sh -- the installation page for FLIN, a programming language we built from scratch.

The website was built entirely with FLIN itself. Not React. Not Next.js. Not any external framework. FLIN, our language, serving its own pages.

And it was hosted on sh0, our PaaS. Not Vercel. Not Railway. Not any external platform. sh0, our platform, managing its own containers.

Three layers of technology. All built by the same two-person team: one human founder and one AI CTO. All running in production. All dogfooding each other.

This is the story of how we got here, what broke along the way, and why dogfooding at this depth changes everything about how you build software.

What Is Double Dogfooding?

Single dogfooding is when you use your own product. Slack uses Slack. GitHub uses GitHub. Linear uses Linear.

Double dogfooding is when the thing you build and the thing you build it with are both your own products. You are simultaneously the vendor and the customer at two levels of the stack.

Here is what our stack looks like:

| Layer | Product | Built With | Deployed On |
|---|---|---|---|
| Language runtime | FLIN | Rust | N/A (compiled binary) |
| Website | flin.sh | FLIN | sh0 |
| Platform | sh0 | Rust + Svelte 5 | Self-hosted (bare metal) |

When something breaks, it could be a bug in the language, a bug in the website code, or a bug in the platform. When everything works, you know all three layers are production-ready -- not because of test coverage numbers, but because real users (starting with you) hit them continuously.

Layer 1: FLIN -- A Language That Remembers

FLIN is a cognitive programming language with a built-in database, reactive UI system, 380+ built-in functions, and zero configuration. You write a .flin file, run flin start ., and you have a full-stack web application.

    // This is a complete FLIN web page
    page "/" {
        <h1>Hello from FLIN</h1>
        <p>No framework. No bundler. No config.</p>
    }

FLIN compiles to a single binary (Rust underneath). It includes its own HTTP server, template engine, i18n system, and component model. The flin.sh website uses all of these features: 5 routes, multi-language support (English, French, Spanish), a component library, shared layouts, and static asset serving.

The dogfooding badge at the bottom of every page says it plainly: "This site is 100% built with FLIN v1.0.0-alpha.2."

Layer 2: sh0 -- A PaaS That Deploys Anything

sh0 is a self-hosted deployment platform. Single Rust binary. Embedded Svelte 5 dashboard. Manages Docker containers, generates Dockerfiles, handles SSL via Caddy, and now supports 20+ runtime stacks including -- as of today -- FLIN.

When you push code or upload a zip to sh0, here is what happens:

- Stack detection -- sh0 scans your project files and identifies the runtime (Node.js, Python, PHP, Go, Rust, FLIN, etc.)

- Dockerfile generation -- if you do not provide a Dockerfile, sh0 generates a production-grade one

- Image build -- Docker builds the image

- Container start -- sh0 creates and starts the container on the sh0-net network

- Health check -- sh0 verifies the container is serving HTTP traffic

- Reverse proxy -- Caddy routes your domain to the container with automatic SSL

- Blue-green swap -- the old container is stopped only after the new one passes health checks

All of this happens in under 60 seconds for most stacks.
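The seven steps above behave like a sequential pipeline in which the first failing stage aborts the deploy. The following is a minimal Rust sketch of that idea, not sh0's actual code: `Stage`, `STAGES`, and `run_pipeline` are hypothetical names chosen for illustration.

```rust
/// Hypothetical stage names mirroring the list above; sh0's real types may differ.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Stage {
    DetectStack,
    GenerateDockerfile,
    BuildImage,
    StartContainer,
    HealthCheck,
    ConfigureProxy,
    BlueGreenSwap,
}

/// The stages in the order they are described above.
pub const STAGES: [Stage; 7] = [
    Stage::DetectStack,
    Stage::GenerateDockerfile,
    Stage::BuildImage,
    Stage::StartContainer,
    Stage::HealthCheck,
    Stage::ConfigureProxy,
    Stage::BlueGreenSwap,
];

/// Runs each stage in order and stops at the first failure, reporting
/// which stage failed. Because `BlueGreenSwap` comes last, the old
/// container is only stopped after every earlier stage has succeeded.
pub fn run_pipeline<F>(mut run_stage: F) -> Result<(), (Stage, String)>
where
    F: FnMut(Stage) -> Result<(), String>,
{
    for stage in STAGES {
        run_stage(stage).map_err(|e| (stage, e))?;
    }
    Ok(())
}
```

Passing the stage runner as a closure keeps the ordering logic separate from the Docker and Caddy calls, so the "never swap before a healthy check" rule can be tested without a container runtime.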

The Day Everything Broke (Before It All Worked)

The path to this moment was not smooth. This session started with a bug report:

    ERROR sh0_api::deploy::pipeline: Deploy pipeline failed
    error=Container health check timed out after 60s

A simple PHP app. A simple HTML site. Both failed to deploy. Both containers were running perfectly -- you could open them in Docker Desktop and browse them -- but sh0 reported them as failed.

We found four bugs stacked on top of each other:

Bug 1: PHP Containers Crashed on Startup

sh0 forces all generated containers to run as a non-root user (uid 1000:1000) for security. But PHP Apache images need root to bind port 80. Every PHP deploy started, Apache tried to bind port 80, failed silently, and exited.

Fix: Added a needs_root() method to the Stack enum. PHP now runs as root. Every other stack still runs non-root.
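A minimal sketch of what such a method might look like. Only `Stack` and `needs_root()` are named in the fix; the variant list is a small illustrative subset, and `container_user` is a hypothetical helper showing how the flag could drive the Dockerfile `USER` instruction.

```rust
/// Illustrative subset of a stack enum; the real one covers 20+ stacks.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Stack {
    NodeJs,
    Python,
    Php,
    Go,
    Rust,
    Flin,
    Static,
}

impl Stack {
    /// True when the generated container must keep running as root.
    /// Per the fix above, only PHP (Apache binding port 80) qualifies.
    pub fn needs_root(&self) -> bool {
        matches!(self, Stack::Php)
    }

    /// Hypothetical helper: the value for the Dockerfile `USER` instruction,
    /// or `None` to keep the image default (root).
    /// "1000:1000" is the uid:gid mentioned above.
    pub fn container_user(&self) -> Option<&'static str> {
        if self.needs_root() {
            None
        } else {
            Some("1000:1000")
        }
    }
}
```

Keeping the exception on the enum means a new stack is non-root by default and has to opt in to root explicitly.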

Bug 2: PHP Dockerfiles Had No Health Checks

Every other stack in sh0 -- Node.js, Python, Go, Rust, Java, .NET, Ruby, Static -- had a Docker HEALTHCHECK instruction in its generated Dockerfile. All nine PHP templates were missing it. Without a HEALTHCHECK, sh0 fell back to a TCP probe, which is less reliable.

Fix: Added HEALTHCHECK --interval=30s --timeout=5s --start-period=10s --retries=3 CMD curl -f http://localhost:80/ || exit 1 to all nine PHP templates.

Bug 3: TCP Probe Used Unreachable Container IPs

On Docker Desktop (macOS/Windows), containers run inside a Linux VM. The container's internal IP address (172.18.0.x) is unreachable from the host. sh0 was probing 172.18.0.3:80 from macOS -- which always times out.

Fix: Added extract_host_port() to read the mapped host port from Docker's inspect API. Now sh0 probes the mapped port on localhost (for example localhost:49322, a random host port assigned by Docker) instead of the container's internal IP.
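To make the lookup concrete, here is a hedged sketch. Docker's inspect output exposes published ports under `NetworkSettings.Ports`, keyed like `"80/tcp"`, with each value either null or a list of host bindings. The signature and the `PortBinding` struct below are assumptions for illustration; the source only names the function, and a real implementation would deserialize the JSON first.

```rust
use std::collections::HashMap;

/// One host binding from `NetworkSettings.Ports` in Docker's inspect output.
#[derive(Debug, Clone)]
pub struct PortBinding {
    pub host_ip: String,
    /// Docker reports the host port as a string, e.g. "49322".
    pub host_port: String,
}

/// Hypothetical signature. `ports` is keyed like Docker's map ("80/tcp");
/// a `None` value models the JSON null Docker uses for unpublished ports.
/// Returns the first host port that parses as a number, if any.
pub fn extract_host_port(
    ports: &HashMap<String, Option<Vec<PortBinding>>>,
    container_port: u16,
) -> Option<u16> {
    let key = format!("{}/tcp", container_port);
    ports
        .get(&key)?
        .as_ref()?
        .iter()
        .find_map(|binding| binding.host_port.parse::<u16>().ok())
}
```

Returning `Option<u16>` rather than a default forces the caller to decide what to do when no mapping exists yet, which matters for the next bug.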

Bug 4: Silent Fallback Passed With Zero Validation

When the container IP could not be extracted, the old code waited 5 seconds and returned success -- with no actual health verification. This was a hack that accidentally made some deploys appear to work on Docker Desktop.

Fix: Removed the silent fallback. If the IP cannot be extracted, sh0 now keeps polling until it appears, or times out and reports a real failure.
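The shape of that fix, polling with a hard deadline and no "assume success" branch, can be sketched as follows. `poll_until` and `ProbeError` are hypothetical names for illustration, not sh0's API.

```rust
use std::thread;
use std::time::{Duration, Instant};

#[derive(Debug, PartialEq, Eq)]
pub enum ProbeError {
    TimedOut,
}

/// Calls `probe` until it yields `Some(value)` or `timeout` elapses.
/// A probe that never yields a value ends in `Err(ProbeError::TimedOut)`;
/// there is no path that returns success without a real result.
pub fn poll_until<T, F>(
    mut probe: F,
    interval: Duration,
    timeout: Duration,
) -> Result<T, ProbeError>
where
    F: FnMut() -> Option<T>,
{
    let deadline = Instant::now() + timeout;
    loop {
        if let Some(value) = probe() {
            return Ok(value);
        }
        let now = Instant::now();
        if now >= deadline {
            return Err(ProbeError::TimedOut);
        }
        // Sleep for one interval, but never past the deadline.
        thread::sleep(interval.min(deadline - now));
    }
}
```

In this sketch the probe closure would wrap something like the host-port lookup, so a container whose mapping never appears surfaces as a timeout instead of a false success.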

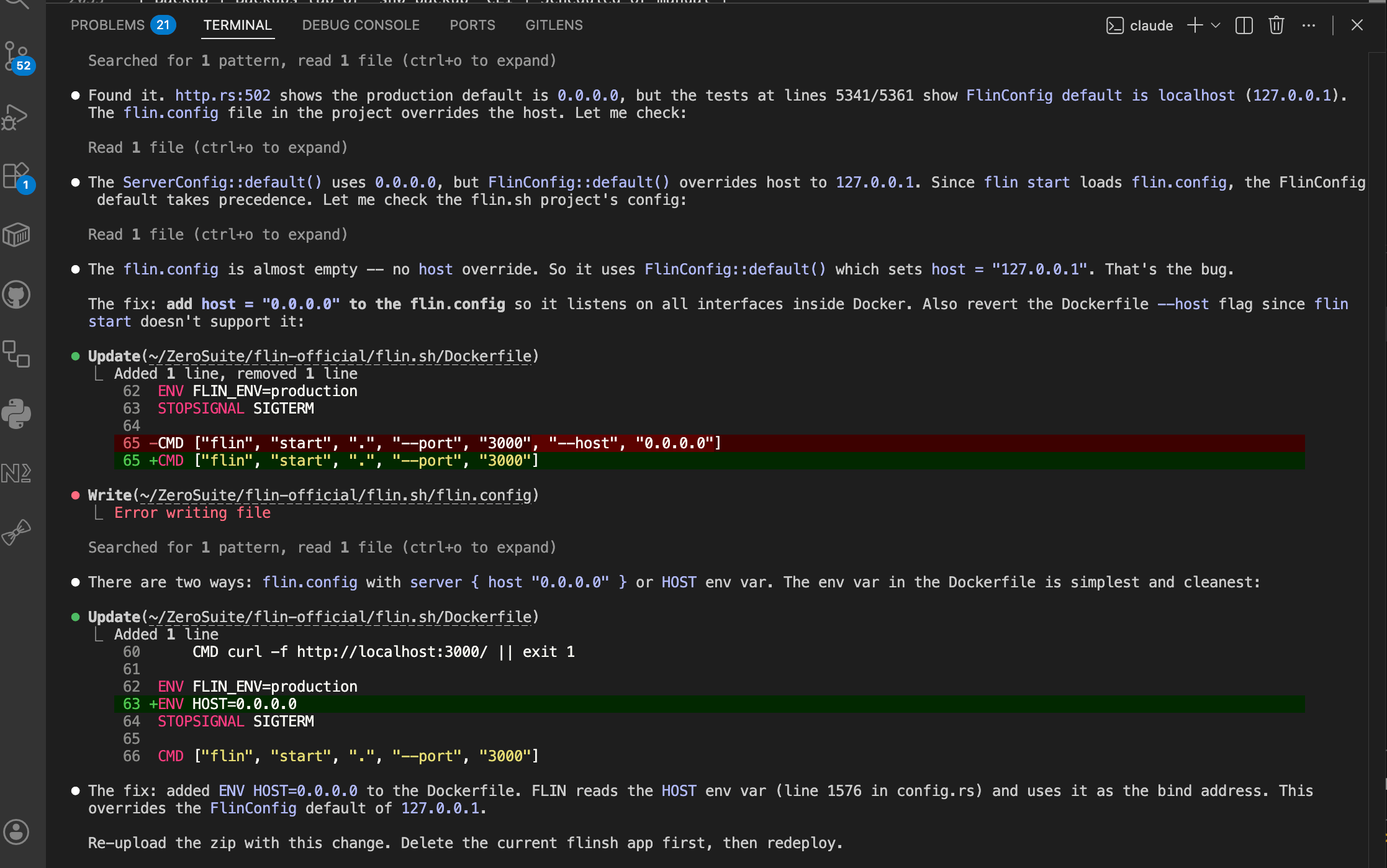

Bonus Bug: FLIN Bound to Localhost Inside Docker

After fixing all four bugs, FLIN deployed successfully -- but the website returned "page unavailable." FLIN's default configuration binds to 127.0.0.1 (localhost only). Inside a Docker container, that means only processes inside the container can reach it. Docker's port forwarding connects to the container's network interface, not to its localhost.

Fix: Added ENV HOST=0.0.0.0 to the FLIN Dockerfile so it listens on all interfaces.
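The difference between the two bind addresses can be illustrated in Rust. This is not FLIN's server code: `bind_addr` and `reachable_from_outside_container` are hypothetical helpers, and the assumption that the server reads a `HOST` value and falls back to 127.0.0.1 is inferred from the fix above.

```rust
use std::net::{IpAddr, Ipv4Addr, SocketAddr};

/// Resolves the listen address from an optional `HOST` value.
/// Falls back to 127.0.0.1 when the value is missing or not an IP address.
pub fn bind_addr(host: Option<&str>, port: u16) -> SocketAddr {
    let ip = host
        .and_then(|h| h.parse::<IpAddr>().ok())
        .unwrap_or(IpAddr::V4(Ipv4Addr::LOCALHOST));
    SocketAddr::new(ip, port)
}

/// A server bound to a loopback address only accepts connections that
/// originate inside the same container, so published ports cannot reach it.
pub fn reachable_from_outside_container(addr: &SocketAddr) -> bool {
    !addr.ip().is_loopback()
}
```

With no `HOST` set this yields `127.0.0.1:3000`, which is fine on a laptop and invisible behind Docker's port mapping; with `HOST=0.0.0.0` the same code listens on every interface.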

Bonus Bug 2: Health Check Timeout Too Short

FLIN's cold start takes approximately 6 seconds per request (template compilation on first hit). The Dockerfile's HEALTHCHECK had a 3-second timeout. Every health check timed out before FLIN finished responding.

Fix: Increased HEALTHCHECK timeout from 3s to 10s, start-period from 5s to 15s. Increased sh0's global health check timeout from 60s to 180s.

Six Bugs, One Session, Zero Remaining Issues

Here is the remarkable part: all six bugs were found and fixed in a single session. Not through test suites (though all 554 existing tests continued to pass). Through dogfooding.

The act of deploying our own product on our own platform -- under real conditions, with real Docker Desktop quirks, with real cold-start behavior -- exposed issues that no amount of unit testing would have caught:

- Non-root containers failing on port 80? That is an integration issue between Dockerfile generation and container runtime configuration.

- TCP probes failing on Docker Desktop? That is a platform-specific networking issue invisible on Linux CI.

- FLIN binding to localhost? That is an application default that only matters inside containers.

- Health check timeout too short? That is an interaction between the app's cold-start time and the platform's expectations.

Each of these bugs lived at the boundary between two systems. Unit tests live inside one system. Dogfooding lives at the boundary.

What FLIN Stack Detection Looks Like

As of today, sh0 natively supports FLIN applications. Here is how it works:

Detection: sh0 looks for flin.config or .flin files in the app/ directory.
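A file-based check like this one is easy to sketch. The function name `looks_like_flin` is hypothetical, and the layout is an assumption: `flin.config` at the project root, `.flin` sources directly inside `app/`. The rule as stated does not spell out the exact paths.

```rust
use std::fs;
use std::path::Path;

/// Returns true when `project_root` looks like a FLIN app.
/// Assumed layout: `flin.config` at the root, or at least one `*.flin`
/// file directly inside `app/`.
pub fn looks_like_flin(project_root: &Path) -> bool {
    if project_root.join("flin.config").is_file() {
        return true;
    }
    match fs::read_dir(project_root.join("app")) {
        Ok(entries) => entries
            .filter_map(|entry| entry.ok())
            .any(|entry| {
                entry
                    .path()
                    .extension()
                    .map_or(false, |ext| ext == "flin")
            }),
        // No app/ directory, or it is unreadable: not detected as FLIN.
        Err(_) => false,
    }
}
```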

Dockerfile generation: When a FLIN app has no Dockerfile, sh0 auto-generates one:

    FROM debian:bookworm-slim
    WORKDIR /app

    RUN apt-get update && apt-get install -y ca-certificates curl \
        && rm -rf /var/lib/apt/lists/* \
        && useradd -m -u 1000 flin

    RUN curl -fsSL "https://github.com/flin-lang/flin/releases/latest/download/flin-linux-x64.tar.gz" \
        -o /tmp/flin.tar.gz \
        && tar -xzf /tmp/flin.tar.gz -C /usr/local/bin/ \
        && chmod +x /usr/local/bin/flin \
        && rm /tmp/flin.tar.gz

    COPY . .
    RUN chown -R flin:flin /app
    USER flin

    EXPOSE 3000
    ENV FLIN_ENV=production
    ENV HOST=0.0.0.0

    HEALTHCHECK --interval=10s --timeout=10s --start-period=15s --retries=3 \
        CMD curl -f http://localhost:3000/ || exit 1

    CMD ["flin", "start", ".", "--port", "3000"]

Downloads the latest FLIN binary from GitHub Releases. Runs as non-root. Health check with generous timeouts for cold start. Binds to all interfaces. Production mode.

A developer can now git push a FLIN project to sh0, and it deploys automatically. No Dockerfile required.

The Numbers

| Metric | Value |
|---|---|
| sh0 Rust codebase | ~30,000 lines across 10 crates |
| sh0 Dashboard | Svelte 5 SPA, embedded in binary |
| FLIN compiler | Rust, single binary |
| flin.sh website | 5 routes, 3 languages, 0 npm dependencies |
| Deploy time (flin.sh on sh0) | 58 seconds (clone + build + health check + route) |
| Health check pass | ~14 seconds after container start |
| Bugs found today | 6 |
| Bugs remaining | 0 |
| Tests passing | 554 / 554 |
| External dependencies for hosting | 0 (Docker + Caddy, both managed by sh0) |

Why This Matters

There is a moment in every product's life where it graduates from "demo" to "real." That moment is not when you write documentation. It is not when you add a pricing page. It is not even when you get your first customer.

It is when you trust it enough to run your own business on it.

Today, ZeroSuite runs:

- flin.sh (FLIN installation page) -- built with FLIN, hosted on sh0

- flin.dev (FLIN documentation) -- built with FLIN, hosted on Easypanel (soon sh0)

- sh0.dev (sh0 marketing site) -- SvelteKit, hosted on Easypanel

- Multiple test applications (PHP, HTML, Node.js, Svelte) -- all on sh0

We are not telling developers "trust our platform." We are showing them that we already do.

And when something breaks -- a health check timeout, a port binding issue, a cold-start race condition -- we feel it before any customer does. We fix it before any customer reports it. That is the power of dogfooding at every layer of the stack.

What Comes Next

This milestone unlocks several things:

- FLIN apps in the sh0 Deploy Hub -- developers can now deploy FLIN applications with one click, alongside 183 other deployment options

- flin.dev migration to sh0 -- moving the documentation site from Easypanel to sh0

- sh0 demo server -- the public demo at demo.sh0.app will showcase the sh0 self-hosted platform

- FLIN 1.0 launch -- 79 days until v1.0, now with a fully dogfooded deployment story

The double dogfooding cycle is now self-sustaining. Every improvement to FLIN makes flin.sh better. Every improvement to sh0 makes FLIN deployments smoother. Every bug found in one product strengthens the other.

This is what it looks like when you build your entire stack yourself: painful at first, but compounding forever.

March 31, 2026. A deploy log, a website, a language, and a platform -- all ours, all running, all talking to each other. This is the day we stopped being three separate projects and became one system.